Sam Altman’s 13-page policy blueprint, titled ‘Industrial Policy for the Intelligence Age,’ outlines measures such as auto-triggering safety nets, containment strategies for rogue AI, and direct dividends for citizens from AI-driven economic growth. Altman emphasized that this document serves as a starting point for discussion rather than a definitive solution.

In a newly published policy document, OpenAI has called for extensive economic reforms designed to prepare society for the impending era of superintelligence. Among the proposals are taxes on automated labor, the establishment of a national public wealth fund partially funded by AI companies, and trials for a 32-hour workweek.

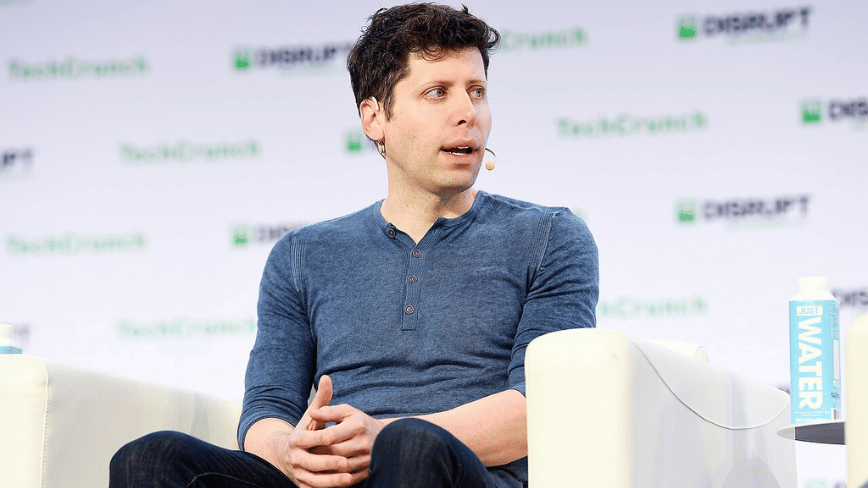

The document, entitled ‘Industrial Policy for the Intelligence Age: Ideas to Keep People First,’ was released just as Congress gears up to debate legislation on artificial intelligence. In an exclusive interview, CEO Sam Altman said the transformations anticipated from AI are comparable to those of the Progressive Era and the New Deal. He highlighted two immediate threats posed by advanced AI: cyberattacks and bioweapons.

Among the most innovative proposals is the creation of a public wealth fund. OpenAI advocates a government-managed fund that would receive contributions from AI companies and invest in AI businesses and in other sectors integrating the technology. The fund’s profits would be distributed directly to American citizens, much as Alaska’s Permanent Fund pays residents annual dividends from oil revenues.

On labor, the document suggests taxing automated labor and shifting the tax base from payroll toward capital gains and corporate income. The shift reflects the possibility that AI could significantly reduce the wage-and-payroll revenue that currently supports Social Security.

The proposed 32-hour workweek is presented as an ‘efficiency dividend’ drawn from AI-driven productivity gains.

The policy document also details ‘containment playbooks’ for scenarios in which dangerous AI systems become self-sustaining and self-replicating. OpenAI acknowledges that such systems may reach a point where they ‘cannot be easily recalled’ and proposes government coordination as the mechanism for response.

Additionally, the blueprint envisions automatic triggers for safety nets: if metrics indicating AI-driven job displacement reach predefined levels, benefits such as unemployment payments and wage insurance would automatically increase, then taper off once conditions stabilize.
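To make the trigger idea concrete, here is a minimal sketch of how such a rule could work. It is purely illustrative: the document as described here does not specify a formula, and the metric, the thresholds, and the names `TriggerPolicy` and `benefit_multiplier` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TriggerPolicy:
    # All values are hypothetical; the article gives no actual thresholds.
    trigger_level: float  # displacement metric at which the boost starts
    full_level: float     # displacement metric at which the boost is at its maximum
    max_boost: float      # maximum fractional increase in benefits (0.5 = +50%)


def benefit_multiplier(displacement: float, policy: TriggerPolicy) -> float:
    """Return the factor applied to baseline benefits for a given metric reading."""
    if displacement <= policy.trigger_level:
        # Below the trigger, benefits stay at their baseline level.
        return 1.0
    span = policy.full_level - policy.trigger_level
    # Scale the boost linearly between the two thresholds and cap it at the maximum.
    fraction = min((displacement - policy.trigger_level) / span, 1.0)
    return 1.0 + policy.max_boost * fraction


policy = TriggerPolicy(trigger_level=4.0, full_level=8.0, max_boost=0.5)
for reading in (3.0, 6.0, 12.0, 5.0):
    print(reading, benefit_multiplier(reading, policy))
```

Because the multiplier depends only on the current reading, benefits rise as the metric climbs past the trigger and fall back toward baseline as it recedes, which is one simple way to model the tapering the blueprint describes.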

Altman told Axios that a major cyberattack facilitated by near-future AI models is ‘totally possible’ within the next year, and that the possibility of AI models being used to develop novel pathogens is ‘no longer theoretical.’

Altman candidly discussed the dual nature of OpenAI’s position, noting that the company is at the forefront of developing the very technology it wants regulated. By positioning itself as a responsible actor proposing solutions, OpenAI seeks to shape the regulatory landscape before others dictate it, a strategy that resembles the approach taken by its competitor Anthropic.

This policy paper emerges at a critical juncture for OpenAI, which is preparing for an initial public offering (IPO) and has recently completed a $110 billion private funding round, all while facing scrutiny over its transition from a non-profit organization.

Whether OpenAI’s advocacy is driven by genuine altruism or strategic intent, Altman conceded that not every proposal will hold up: ‘Some will be good. Some will be bad. But we do feel a sense of urgency. And we want to see the debate of these issues really start to happen with seriousness.’